The explosive growth of AI chatbots has brought both transformative benefits and significant challenges to the forefront. ChatGPT alone reached an estimated 100 million monthly users in January 2023, just two months after its November 2022 launch, and grew to roughly 700 million weekly active users by mid-2025.

As these tools become ubiquitous in daily life and enterprise operations (67% of Fortune 500 companies had implemented AI chatbots by 2025, and the global AI chatbot market was forecast to reach $2.8 billion that year), a cascade of questions about data privacy, security, and usage has emerged.

This section addresses the most common and critical questions surrounding AI chatbot conversation archives, drawing heavily on the latest research findings to provide clear, comprehensive, and authoritative answers.

We delve into how user data is handled, the inherent privacy risks, the regulatory landscape, and practical measures users and organizations can take to mitigate concerns and harness the benefits responsibly.

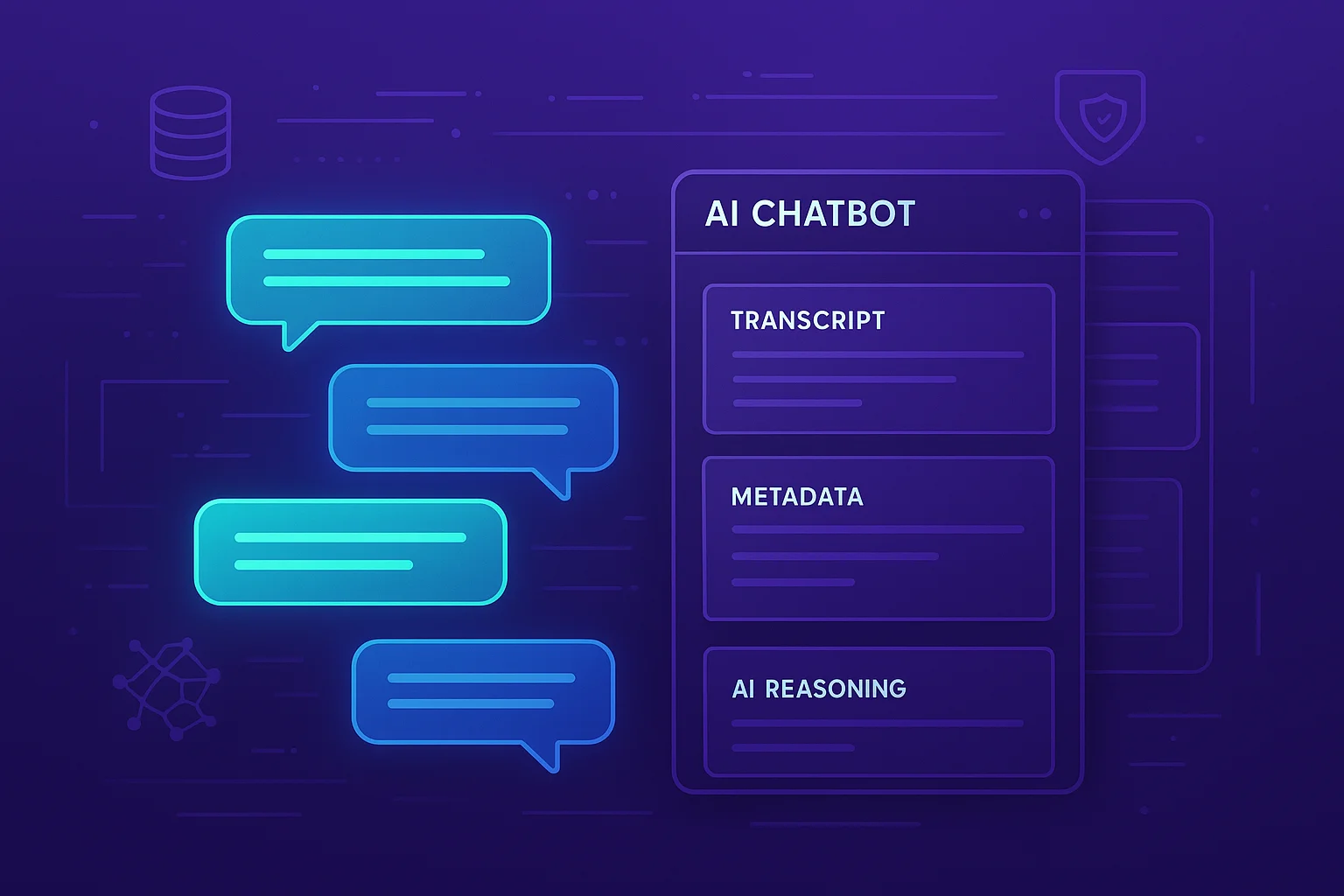

1. How are AI Chatbot Conversations Used and Stored by Providers?

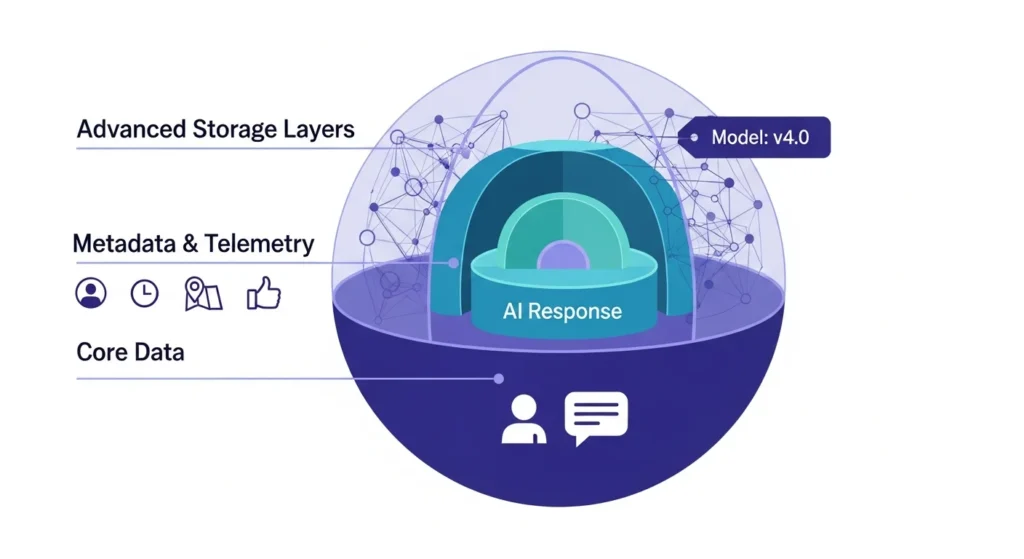

AI chatbots, and the large language models (LLMs) that power them, depend on vast datasets, and user conversations are a primary source for refining these models.

The research shows that current practices involve extensive data collection and retention.

1.1. Default Usage for Model Training

A 2025 Stanford analysis of six leading AI chat platforms found that all six use user-provided chat data to train or improve their models by default.

Major providers such as OpenAI, Google, and Anthropic actively leverage user interactions to fine-tune AI capabilities, learn from new information, and correct errors.

This means that, unless a user explicitly opts out, any prompts, questions, or even file uploads provided to a public chatbot may be collected and retained to improve the AI’s performance.

Anonymized data is in theory less risky, but truly anonymizing free-form conversational data is difficult, especially when it can be combined with other data about the same user.

Further, some vendors explicitly state in their privacy documentation that human reviewers may read transcripts.
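To make “used for training by default” concrete, the following Python sketch contrasts an opt-out policy with an opt-in policy when a provider assembles a training set. The `Conversation` record and both selection functions are hypothetical illustrations, not any provider’s actual pipeline; the point is simply that when users change nothing, an opt-out default includes everything and an opt-in default includes nothing.

```python
from dataclasses import dataclass


@dataclass
class Conversation:
    user_id: str
    text: str


def default_on(conversations: list[Conversation], opted_out: set[str]) -> list[Conversation]:
    """Opt-out model: every conversation is eligible unless its user has opted out."""
    return [c for c in conversations if c.user_id not in opted_out]


def opt_in_only(conversations: list[Conversation], opted_in: set[str]) -> list[Conversation]:
    """Opt-in model: a conversation is eligible only if its user has explicitly agreed."""
    return [c for c in conversations if c.user_id in opted_in]


# Hypothetical sample conversations.
convos = [
    Conversation("u1", "Plan my week"),
    Conversation("u2", "Review this contract clause"),
]

# If no user changes any setting, the two policies produce opposite results.
print(len(default_on(convos, opted_out=set())))   # 2
print(len(opt_in_only(convos, opted_in=set())))   # 0
print([c.user_id for c in default_on(convos, opted_out={"u2"})])  # ['u1']
```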

1.2. Data Retention Policies and Indefinite Storage

The duration for which conversations are stored is a significant area of concern. Research indicates that many AI chatbot providers retain these interactions indefinitely or without clear limits.

This long-term retention poses considerable privacy risks, as data that may seem innocuous today could become sensitive in the future, particularly if cross-referenced with other data points.

The opaqueness of these retention policies contributes to user distrust and complicates compliance with data protection regulations that might mandate specific retention periods.

For example, during a copyright lawsuit, a U.S. court ordered OpenAI to preserve all ChatGPT logs, including “deleted” chats, indefinitely, highlighting how legal processes can override user intentions for data deletion.

OpenAI itself warned that this preservation order compromised user privacy promises.

1.3. Aggregation with Other Personal Data

The issue is compounded by the potential for chatbot inputs to be combined with other personal data collected by multi-product companies.

If an AI chatbot provider also offers search engines, social media platforms, or other services, it can merge conversational data with information from these other touchpoints.

This creates a more comprehensive, and potentially more invasive, profile of users. For instance, a casual query about “heart-friendly recipes” could, within such an aggregated system, inadvertently tag a user as a health-risk individual.

This practice of cross-referencing and data aggregation often occurs without explicit user awareness or consent, contributing to a sense of “blurred boundaries” in data usage.
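A toy Python sketch shows how little machinery such aggregation needs. The two data sources, the shared `user_id` join key, and the keyword-to-tag rule are all hypothetical; no provider is known to use this exact logic. Once records from different products share an identifier, a simple rule over the merged text is enough to attach an inference the user never stated.

```python
from collections import defaultdict

# Hypothetical records from two products run by the same company,
# keyed by a shared account identifier.
chat_logs = [
    {"user_id": "u1", "text": "Any heart-friendly recipes for dinner?"},
    {"user_id": "u2", "text": "How do I reset my router?"},
]
search_history = [
    {"user_id": "u1", "query": "low sodium diet"},
    {"user_id": "u2", "query": "best hiking trails"},
]

# Hypothetical inference rule: keywords a profiling system might map to a tag.
HEALTH_KEYWORDS = ("heart", "sodium", "cholesterol")


def build_profiles(chats, searches):
    """Merge both data sources per user and attach inferred tags."""
    profiles = defaultdict(lambda: {"texts": [], "tags": set()})
    for row in chats:
        profiles[row["user_id"]]["texts"].append(row["text"])
    for row in searches:
        profiles[row["user_id"]]["texts"].append(row["query"])
    for profile in profiles.values():
        combined = " ".join(profile["texts"]).lower()
        if any(word in combined for word in HEALTH_KEYWORDS):
            profile["tags"].add("possible_health_risk")
    return dict(profiles)


profiles = build_profiles(chat_logs, search_history)
print(sorted(profiles["u1"]["tags"]))  # ['possible_health_risk']
print(sorted(profiles["u2"]["tags"]))  # []
```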

1.4. Transparency Deficits

A recurring theme in the research is the lack of transparency in AI chatbot privacy policies.

These policies are frequently described as confusing, buried in legal jargon, and lacking clarity on specific retention limits or who can access the data.

This opacity prevents users from making truly informed decisions about sharing their data.

The absence of clear, concise, and accessible information about data handling practices is a major driver of public distrust in AI firms: in 2025, 91% of consumers said they believe AI companies misuse personal data.

2. Are AI Chatbot Conversations Private and Secure?

Despite the human-like interaction style that often leads users to perceive chatbot conversations as private, the reality is that they are not inherently private or secure.

The research consistently highlights significant vulnerabilities and a fundamental lack of legal and technical safeguards.

2.1. Lack of Legal Confidentiality

Perhaps the most crucial point for users to understand is that, unlike conversations with lawyers or doctors, what you tell an AI chatbot is not protected by legally binding confidentiality.

There are currently no industry-wide regulations that enforce such confidentiality for AI interactions.

This means that personal information shared with a chatbot, including highly sensitive data, lacks the legal protection afforded to privileged communications.

As one expert succinctly put it, users should “never share anything with a chatbot you wouldn’t post publicly.”

Yet many users mistakenly assume their chats are private because the conversational interface feels personal.

2.2. Human Access to Conversations

Even when data is nominally collected only for model training, human employees or contractors can access user conversations.

Meta, for instance, has used human contractors to review AI chats, giving them access to raw user details, including health and financial information.

OpenAI itself has acknowledged that chats are monitored for abuse and may be reviewed by trainers, even after implementing an opt-out mode for training data.

This human-in-the-loop review further diminishes any perceived privacy, making the content of conversations vulnerable to human error, negligence, or malicious intent.

2.3. Risks of Data Breaches and Misconfigurations

The archiving of conversational data creates new attack surfaces and points of vulnerability.

Several high-profile incidents underscore these risks.

In March 2023, a ChatGPT bug let some users see the titles of other users’ chat histories. The same bug exposed payment-related details, such as names, email addresses, and the last four digits of credit card numbers, for approximately 1.2% of ChatGPT Plus subscribers who were active during a nine-hour window.

OpenAI temporarily disabled chat history features and apologized for the breach.

In 2025, Elon Musk’s “Grok” chatbot inadvertently exposed up to 300,000 user conversations, which search engines publicly indexed because of a configuration error.

This meant sensitive user queries became searchable online, highlighting how improper storage or oversight can turn private chats into public data.

A European fintech company suffered a significant lapse in 2025, accidentally logging sensitive chatbot conversations (including account numbers and passwords) to an unencrypted, publicly accessible analytics dashboard.

This went unnoticed for weeks, illustrating how human error and misconfiguration, rather than sophisticated cyberattacks, can lead to severe data exposure.

These incidents demonstrate that even prominent tech companies are not immune to security flaws that can expose highly sensitive conversational data.

2.4. Shadow AI and Insider Threats

The rapid adoption of AI chatbots in the workplace has introduced a new form of insider threat: “shadow AI.” Employees, eager to leverage AI for productivity, often paste confidential company information into public chatbots without realizing the implications.

Samsung experienced this firsthand in 2023, with three incidents of employees leaking confidential data, including source code and meeting notes, to ChatGPT within 20 days of allowing its internal use.

Because the public version of ChatGPT could use inputs for model training, Samsung had effectively handed its secrets to OpenAI.

Samsung responded by banning external AI chatbots for employee use, and companies such as Apple and JPMorgan imposed similar restrictions.

A 2025 report indicated that nearly 50% of enterprise employees use AI tools for work, often inputting sensitive data, creating a security blind spot for traditional data loss prevention systems not designed for AI API calls.
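As a rough illustration of what an AI-aware DLP rule might check, here is a minimal Python sketch. It assumes outbound requests can already be intercepted with their destination host and body; the host list, the patterns, and the `inspect_outbound` function are hypothetical, and real DLP products use their own policy engines and detectors.

```python
import re

# Hypothetical list of public AI chatbot hosts the organization has not approved.
UNAPPROVED_AI_HOSTS = {"chat.example-ai.com", "api.example-llm.net"}

# Hypothetical patterns for data the organization treats as sensitive.
SENSITIVE_PATTERNS = {
    "api_key": re.compile(r"\b(?:sk|key)-[A-Za-z0-9]{16,}\b"),
    "internal_marker": re.compile(r"\bCONFIDENTIAL\b", re.IGNORECASE),
    "card_number": re.compile(r"\b(?:\d[ -]?){13,16}\b"),
}


def inspect_outbound(host: str, body: str) -> list[str]:
    """Return the names of sensitive patterns found in a request to an unapproved AI host."""
    if host not in UNAPPROVED_AI_HOSTS:
        return []
    return [name for name, pattern in SENSITIVE_PATTERNS.items() if pattern.search(body)]


findings = inspect_outbound("chat.example-ai.com", "Summarize this CONFIDENTIAL roadmap")
print(findings)  # ['internal_marker']
```

Scoping the check to AI destinations keeps false positives down on ordinary traffic, but pattern matching will still miss sensitive material that has no recognizable format, such as pasted meeting notes.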

3. What are the Benefits of Archiving Chatbot Conversations for Businesses and Users?

Despite the substantial privacy and security risks, archiving chatbot conversations offers considerable benefits that drive their widespread adoption and continued use.

For both businesses and users, these archives serve several valuable purposes.

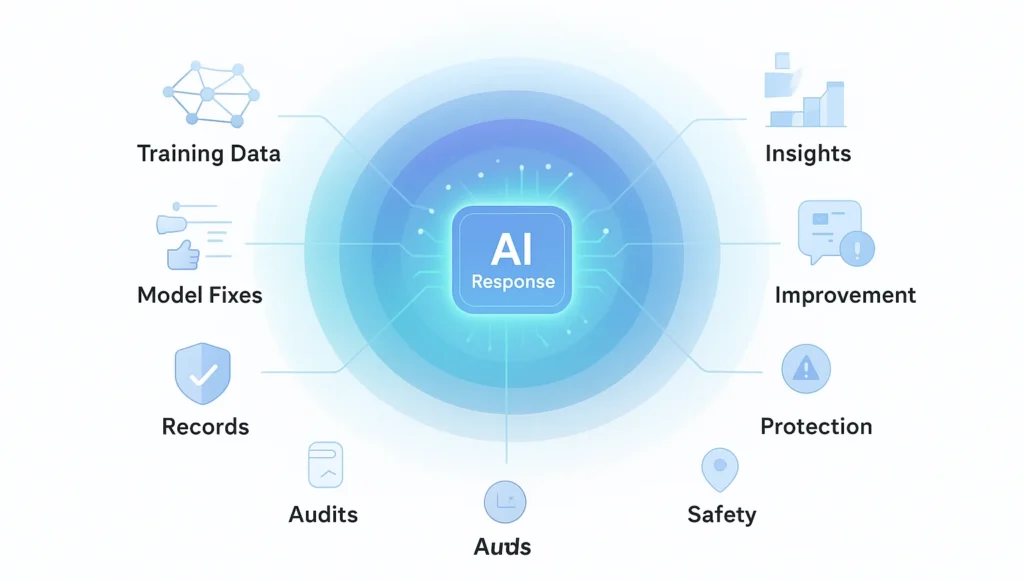

3.1. Continuous AI Learning and Improvement

The primary benefit for AI providers is the ability to enable continuous learning and improvement of their models.

Every user interaction provides valuable data for training, allowing AI systems to refine their responses, expand their knowledge base, and become more accurate and helpful.

This “virtuous cycle,” in which more data leads to better performance, which attracts more users and generates more data, is fundamental to the rapid advancement of AI.

Businesses deploying their own chatbots similarly rely on archived conversations to fine-tune their AI for higher accuracy, ensuring that bots correctly understand user intent and provide relevant information.

This training data helps fix weaknesses, such as inappropriate or incorrect answers, and improves the overall user experience.

3.2. Personalized User Experiences and Contextual Memory

Archived conversations enable AI chatbots to offer personalized and context-aware interactions.

By “remembering” past exchanges, chatbots can pick up where a conversation left off, reference previous inquiries, or recall personal preferences.

This avoids frustrating repetition and allows for a more seamless and human-like interaction.
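A minimal Python sketch of how a chatbot back end might keep recent per-user turns and feed them back as context is shown below. The `ConversationStore` class, its `max_turns` limit, and the plain-text prompt format are hypothetical; real services implement memory in their own ways, often with summarization or retrieval rather than raw transcripts.

```python
from collections import defaultdict, deque


class ConversationStore:
    """Keeps the most recent turns per user so they can be fed back as context."""

    def __init__(self, max_turns: int = 6):
        # deque(maxlen=...) silently drops the oldest turn once the limit is reached.
        self._history = defaultdict(lambda: deque(maxlen=max_turns))

    def add_turn(self, user_id: str, role: str, text: str) -> None:
        self._history[user_id].append((role, text))

    def build_context(self, user_id: str, new_message: str) -> str:
        """Prepend stored turns to the new message, as a prompt-assembly step might."""
        lines = [f"{role}: {text}" for role, text in self._history[user_id]]
        lines.append(f"user: {new_message}")
        return "\n".join(lines)

    def forget(self, user_id: str) -> None:
        """Delete a user's stored history, e.g. when memory is switched off."""
        self._history.pop(user_id, None)


store = ConversationStore(max_turns=4)
store.add_turn("u1", "user", "My order number is 1042.")
store.add_turn("u1", "assistant", "Thanks, I found order 1042.")
print(store.build_context("u1", "Has it shipped yet?"))
```

The same stored history that makes the follow-up question answerable is also what persists on the provider’s side, which is why a `forget`-style control matters.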

For example, a customer service chatbot can access previous order details or support tickets, leading to faster and more efficient resolutions.

Consumer AI services like ChatGPT also offer chat history syncing across devices, allowing users to revisit answers and maintain continuity.

This convenience is a significant selling point, with 60% of consumers stating that chatbots influence their purchasing decisions due to helpful, tailored suggestions.

3.3. Quality Control and Compliance

In many regulated industries, retaining transcripts of AI-driven interactions is crucial for quality assurance and compliance.

Just as human customer service calls are recorded, AI chat logs can be audited to ensure accuracy, compliance with regulations, and adherence to company policies.

This is particularly important in sectors like finance and healthcare, where AI might provide advice or handle sensitive transactions.

Archives serve as a liability trail, documenting decisions made or information provided by the AI, which can be essential for legal defensibility in case of disputes or errors.

Additionally, these logs aid in training human staff, allowing them to learn from successful chatbot resolutions or identify areas where the bot requires human intervention.

3.4. Insight Generation and Business Intelligence

Archived conversations are a rich source of business intelligence.

By mining chat logs, companies can identify customer pain points, understand common queries, and gauge sentiment.

Text analysis on these archives helps product and marketing teams uncover insights directly from the “voice of the customer,” without needing separate surveys.

For instance, frequently asked questions about a specific product feature can signal a need for improved documentation or a product redesign.

The ability to aggregate thousands of queries allows businesses to detect trends, automate FAQs, and gain a deeper understanding of their customer base.

This “conversation intelligence” contributes significantly to customer experience improvements and strategic decision-making.
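As a rough illustration of how aggregating archived queries can surface frequently asked questions, the Python sketch below normalizes each user message and counts repeats. The sample messages and the `min_count` threshold are hypothetical, and commercial conversation-intelligence tools typically rely on clustering or intent models rather than exact matching after normalization.

```python
import re
from collections import Counter

# Hypothetical archived user messages.
archived_messages = [
    "How do I reset my password?",
    "how do i reset my password",
    "Where is my order?",
    "How do I reset my password??",
    "Can I change my delivery address?",
    "Where is my order",
]


def normalize(message: str) -> str:
    """Lowercase, strip punctuation, and collapse whitespace so near-duplicates match."""
    cleaned = re.sub(r"[^a-z0-9\s]", "", message.lower())
    return " ".join(cleaned.split())


def frequent_questions(messages: list[str], min_count: int = 2) -> list[tuple[str, int]]:
    """Return normalized questions asked at least `min_count` times, most common first."""
    counts = Counter(normalize(m) for m in messages)
    return [(q, n) for q, n in counts.most_common() if n >= min_count]


print(frequent_questions(archived_messages))
# [('how do i reset my password', 3), ('where is my order', 2)]
```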

3.5. Operational Efficiency and Cost Savings

The integration of AI chatbots, supported by their archived knowledge, yields substantial operational efficiencies and cost savings. Chatbots were projected to handle 85% of all customer service interactions by 2025, saving businesses approximately $8 billion per year in support costs.

Companies typically report a 30-40% reduction in customer service expenses through chatbot implementation.

These efficiency gains are often directly tied to the ability to train bots with large conversation datasets and maintain logs for continuous learning, allowing 24/7 service continuity and instant answers.

This demonstrates why firms are eager to archive chats, provided they can mitigate the associated risks.

4. How are Regulators Responding to AI Chatbot Privacy Concerns?

Governments and regulatory bodies globally are increasingly scrutinizing AI chatbot practices due to mounting privacy concerns.

The response ranges from temporary bans and significant fines to the development of comprehensive new legislative frameworks.

4.1. Italy’s Groundbreaking Actions

Italy has been at the forefront of regulatory action. In March 2023, Italy’s data protection authority (Garante) issued a temporary ban on ChatGPT, citing unlawful data processing and a lack of transparency.

The Garante highlighted OpenAI’s failure to establish a legal basis under GDPR for collecting and processing user data, particularly the data of minors, which it found to be harvested without age verification or parental consent.

This unprecedented ban forced OpenAI to swiftly implement new measures, including age verification, clearer privacy policies, and an opt-out mechanism for data sharing.

Following these changes, the ban was lifted in April 2023.

Building on this, in December 2024, Italy’s Garante fined OpenAI €15 million for GDPR violations, specifically for its unlawful collection of personal data and failure to fulfill transparency requirements.

These actions signal a strong willingness by regulators to enforce existing data protection laws on AI companies and hold them accountable for privacy lapses.

4.2. The EU AI Act and Broader European Scrutiny

The European Union’s AI Act entered into force in August 2024, with most of its obligations applying from August 2026. This landmark legislation categorizes AI systems by risk level, imposing stricter transparency and safety requirements, particularly for “high-risk” AI applications.

While implementing guidance and technical standards are still being finalized, the Act is expected to significantly affect how AI chatbot providers handle data, potentially mandating privacy-by-design principles, comprehensive data impact assessments, and clear explanations of AI decision-making.

Inspired by Italy’s actions, other European regulators, as well as Canada, have launched their own investigations into AI chatbot data practices, indicating a growing global momentum towards stricter oversight and the imposition of a “privacy by design” standard for AI development.

4.3. Patchwork Regulation in the United States

In contrast to the EU’s comprehensive approach, the United States currently lacks a federal privacy law that specifically addresses AI chatbot data.

Enforcement primarily relies on a patchwork of state-specific privacy laws (like CCPA in California) and oversight by bodies like the Federal Trade Commission (FTC).

This disparity means U.S. users often rely on inconsistent state protections, which can lead to regulatory gaps and make it challenging for companies to ensure compliance across different jurisdictions.

4.4. International Divergence

The global regulatory landscape is diverse. China, for example, has strict content regulations and often requires companies to store chat data locally, where it is subject to government access.

This highlights how privacy expectations and regulatory demands vary significantly across different regions, creating a complex compliance environment for global AI service providers.

Overall, regulatory responses are pushing AI companies towards greater transparency, better consent mechanisms, and more robust data protection measures.

The trend suggests that businesses operating AI chatbots must proactively embed privacy and security safeguards or face significant legal, financial, and reputational repercussions.

5. How can Users and Organizations Protect their Privacy with AI Chatbots?

Given the privacy pitfalls and security risks associated with AI chatbot conversation archives, both individual users and organizations must adopt proactive measures to protect sensitive information.

This involves a combination of informed caution, leveraging available privacy features, and implementing robust internal policies.

5.1. For Individual Users: Be Proactive and Informed

- Assume Nothing is Confidential: The most crucial user advice is to treat all AI chatbot interactions as potentially public. “Never share anything with a chatbot you wouldn’t post publicly” is a wise mantra. Do not input highly sensitive personal, financial, health, or proprietary information into public AI chatbots.

- Utilize Opt-Out and Incognito Modes: Many leading AI chatbots, spurred by regulatory and public pressure, now offer options to disable chat logging for model training. In April 2023, OpenAI added a setting, often described as an “incognito mode,” that lets users turn off chat history so that new conversations are not saved to their history or used for training, although OpenAI still retained them for 30 days to monitor for abuse. Users should actively seek out and enable such privacy-enhancing features.

- Review and Manage Chat History: Regularly review your chat history with AI services. Understand options for deleting individual conversations or your entire chat history. While deletion may not guarantee permanent erasure from all provider servers (especially if legal holds are in place), it reduces exposure. Some platforms also offer tools to download your data, promoting transparency.

- Understand Privacy Policies (the Gist of it): While often dense, try to grasp the basic tenets of a chatbot’s privacy policy. Pay attention to clauses about data retention, data sharing with third parties, and how data is used for model training.

- Be Wary of AI Memory: Some chatbots develop a “memory” of past interactions to enhance personalization. While convenient, this means historical data can inform future responses. If you’re concerned about this, understand how to manage or disable these memory features, often found in privacy settings.

5.2. For Organizations: Implement Privacy by Design and Robust Policies

Organizations leveraging or considering AI chatbots, especially for internal or customer-facing operations, need a comprehensive strategy:

- Adopt Privacy-by-Design and Data Minimization: Integrate privacy protections from the outset. This means collecting only the data essential for the chatbot’s function and actively anonymizing or redacting Personally Identifiable Information (PII) from chat logs before storage. Consider session-based memory models where chats are not persistently stored unless a user explicitly opts in or logs in, as demonstrated by Klarna’s successful revamp.

- Clear Transparency and User Consent: Implement clear and concise privacy notices at the start of chatbot interactions, informing users how their data will be used, stored, and for how long. Provide accessible mechanisms for users to opt out of data collection for training, manage their data, and request deletion. Transparency can build trust and become a competitive advantage, as 75% of consumers will not buy from companies they do not trust with their data.

- Strong Security Controls and Data Governance:

  - Encryption: Ensure all stored chat logs are encrypted at rest and in transit.

  - Access Control: Implement strict role-based access controls, limiting who can view or query chat archives to only authorized personnel. Monitor access logs for unusual activity.

  - Retention Policies: Establish clear, legally compliant data retention schedules. Automatically delete or fully anonymize chat records once their purpose has been fulfilled and legal obligations met. This limits the “blast radius” in case of a breach.

  - Regular Audits: Conduct regular security audits and penetration tests to identify and rectify misconfigurations or vulnerabilities in chatbot infrastructure.

- Mitigate Shadow AI:

  - Employee Education: Provide mandatory training to all employees on the risks of using public AI chatbots with company data. Clearly instruct them on what information can and cannot be shared.

  - Approved Internal Solutions: Deploy secure, enterprise-grade AI chatbot solutions (e.g., ChatGPT Enterprise/Business, Microsoft Azure OpenAI), where data remains within the organization’s control and is explicitly not used for external model training. This helps channel employee AI usage into safe environments.

  - Data Loss Prevention (DLP): Update DLP systems to monitor and prevent sensitive company data from being pasted into unauthorized AI interfaces (e.g., public web-based chatbots).

- Vendor Due Diligence: When using third-party chatbot providers, rigorously evaluate their privacy and security practices, data handling agreements, and compliance certifications. Ensure contracts specify data ownership, usage limitations, and incident response procedures.
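The data-minimization and retention practices listed above can be sketched together in Python: redact obvious PII before a message is written to the archive, then purge records older than a retention window. The regex patterns, the 30-day window, and the record layout are illustrative assumptions. Regex redaction catches only well-formed identifiers and will miss names, street addresses, and free-text health details, so production systems usually pair it with dedicated PII-detection tooling.

```python
import re
from datetime import datetime, timedelta, timezone

# Illustrative patterns only; they catch well-formed identifiers, not all PII.
# CARD runs before PHONE so long digit runs are labeled as card numbers.
PII_PATTERNS = [
    ("EMAIL", re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")),
    ("CARD", re.compile(r"\b(?:\d[ -]?){13,16}\b")),
    ("PHONE", re.compile(r"\+?\d[\d ()-]{7,}\d")),
]

RETENTION = timedelta(days=30)  # Assumed retention window for this sketch.


def redact(text: str) -> str:
    """Replace matched identifiers with placeholders before storage."""
    for label, pattern in PII_PATTERNS:
        text = pattern.sub(f"[{label}]", text)
    return text


def archive(store: list[dict], user_text: str, now: datetime) -> None:
    """Store only the redacted text plus a timestamp (data minimization)."""
    store.append({"text": redact(user_text), "stored_at": now})


def purge_expired(store: list[dict], now: datetime) -> list[dict]:
    """Keep only records still inside the retention window."""
    return [r for r in store if now - r["stored_at"] < RETENTION]


now = datetime(2025, 6, 1, tzinfo=timezone.utc)
store: list[dict] = []
archive(store, "Email jane.doe@example.com or call +1 (555) 010-9999", now - timedelta(days=45))
archive(store, "My card is 4111 1111 1111 1111", now - timedelta(days=2))
store = purge_expired(store, now)
print([r["text"] for r in store])  # ['My card is [CARD]']
```

Redacting at write time means that even records still inside the retention window never hold the raw identifiers, which shrinks the damage from a leak like the misconfigured analytics dashboard described earlier.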

By proactively implementing these measures, both individuals and organizations can navigate the complexities of AI chatbot conversation archives, maximizing their benefits while effectively safeguarding privacy and minimizing risks.

In the continued dance between technological advancement and ethical responsibility, an informed, cautious, and strategic approach is paramount.

The intricate relationship between the benefits and risks of AI chatbot conversation archives will continue to evolve, especially as regulations mature and technological solutions for privacy-preserving AI advance.


Passive delivers hands-on SaaS and software reviews along with affiliate marketing insights to help entrepreneurs choose the right tools and build profitable online businesses with confidence.